“No man is an island.” – John Donne

This line comes from Meditation XVII of Devotions upon Emergent Occasions, a prose work John Donne published in 1624. It conveys the connected nature of mankind: each of us is part of a larger whole.

What does this have to do with Cloud Readiness? Everything. Clouds are about hosting applications, and in most Enterprises, application flows and interdependencies are poorly understood and seldom documented.

Legacy applications are rarely stand-alone systems. In Enterprises, these applications were built over many years and are highly connected and interdependent. Mapping a set of application flows can be complicated, and the resulting diagrams can look like a Rube Goldberg machine.

Separately, there is another issue: Cloud connectivity. A CIO once asked me about a performance problem one of his teams was having with Amazon: ‘I have an internet connection and it’s not saturated, why is Amazon blaming our network?’

Could it be the network?

Yes, because sufficient bandwidth is not enough. Even basic connectivity requires analysis. His company was connected to a small Regional Provider, which in turn connected to a pair of larger providers, which connected to Tier 1 providers, which were connected to Amazon.

Do you see the issue? They had Service Level Agreements (SLAs) only with the provider they paid for connectivity, the small Regional Provider. The chain of connectivity from that point to Amazon was out of their control.
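To make the SLA gap concrete, here is a minimal Python sketch that models the CIO's path as an ordered list of transit networks and reports which of them sit outside the company's contracts. The provider labels and the `uncovered_hops` helper are hypothetical; a real assessment would derive the path from traceroute and routing data rather than a hand-written list.

```python
from typing import List, Set


def uncovered_hops(path: List[str], contracted: Set[str]) -> List[str]:
    """Return the transit networks on the path with which we hold no SLA.

    `path` lists networks in order from the enterprise edge toward the
    cloud provider; `contracted` names the providers we pay directly.
    """
    return [hop for hop in path if hop not in contracted]


# Hypothetical transit path modeled on the example above.
path = [
    "Regional Provider",
    "Larger Provider (one of a pair)",
    "Tier 1 Provider",
]
print(uncovered_hops(path, {"Regional Provider"}))
# ['Larger Provider (one of a pair)', 'Tier 1 Provider']
```

Only the first hop is under contract; everything after it is best effort from the company's point of view.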

What else could it be?

A problem with application flows can look like a network issue. To explain: as we migrated to virtualization and containerization within the Enterprise, the successful projects were often written for the new environment. But for a moment, let’s look at the failures.

Failed projects took one piece of an application and virtualized it separately from the rest of its components. This is not horrible in and of itself, but what if the virtualized environment is in a new Data Center, geographically remote from the rest of the components housed in a Legacy Data Center?

These failed implementations required packet flows between the old and new environments for components that had previously been colocated. Depending on the distance and the number of exchanges, the resulting delay could become significant.

In one case, the issue was the performance of a partially virtualized system. The application, which supported an online web-based system, had become several seconds slower. When I pointed out the latency issue, it was initially dismissed: after all, the Data Centers involved were only 40 ms apart.

However, detailed investigations showed that the number of packets involved (well over 100) was far larger than initially thought, and that the data transfer was using TCP. TCP requires acknowledgements: a sender transmits up to one window of data, then waits for an acknowledgement before sending the next window (or resends the current one if no acknowledgement arrives). Each of those waits costs at least one round trip across the link. The effect can be exacerbated by poor MTU management, link quality issues and other errors.
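The arithmetic behind the dismissed 40 ms figure is worth spelling out. This sketch assumes the 40 ms is a round-trip time and that roughly 100 exchanges each had to wait for a round trip before the next could start, so the waits add up serially instead of overlapping. It also shows the ceiling a TCP window places on a single flow's throughput (window size divided by round-trip time), which is why an unsaturated link can still be slow. The function names and the 65,535-byte window (the largest possible without TCP window scaling) are illustrative assumptions, not measurements from the case.

```python
def serial_delay_ms(round_trips: int, rtt_ms: float) -> float:
    """Added delay when each exchange must wait a full round trip
    before the next one can start (no overlap between exchanges)."""
    return round_trips * rtt_ms


def window_limited_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound on one TCP flow's throughput: at most one window
    of unacknowledged data can be in flight per round trip."""
    bits_per_rtt = window_bytes * 8
    return bits_per_rtt / (rtt_ms / 1000) / 1_000_000


# Assumption: 40 ms is the round-trip time between the two Data Centers.
print(serial_delay_ms(100, 40))         # 4000 ms, i.e. about 4 seconds
print(window_limited_mbps(65_535, 40))  # about 13.1 Mbps per flow
```

A few hundred milliseconds of added delay would be easy to miss; 100 serial round trips at 40 ms each is the "several seconds" users actually felt.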

Because the application was only partially virtualized, the packet flow kept going in and out of the DC where the virtualized system resided. This ‘trombone effect’ in the flow was killing overall performance.
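One simple way to spot a trombone is to walk the sequence of calls in a single transaction and count how often it switches Data Center. The sketch below does that for a hypothetical call path. The component names, site labels and the 20 ms one-way latency (half of a 40 ms round trip) are assumptions for illustration.

```python
from typing import Dict, List


def cross_site_hops(calls: List[str], site_of: Dict[str, str]) -> int:
    """Count consecutive calls in a transaction that move between sites."""
    return sum(
        1
        for src, dst in zip(calls, calls[1:])
        if site_of[src] != site_of[dst]
    )


# Hypothetical placement: only the app tier was moved to the new DC.
site_of = {"web": "legacy", "app": "new", "db": "legacy", "auth": "legacy"}
# One transaction bouncing web -> app -> db -> app -> auth -> app -> web.
calls = ["web", "app", "db", "app", "auth", "app", "web"]

hops = cross_site_hops(calls, site_of)
one_way_ms = 20  # assumption: half of a 40 ms round trip
print(hops, hops * one_way_ms)  # prints "6 120": 6 crossings, 120 ms added
```

Every call into or out of the moved component crosses the link, so the penalty grows with how "chatty" that component is, not with how much bandwidth is available.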

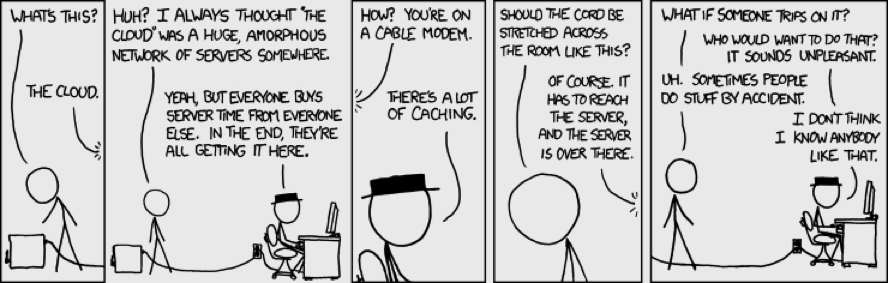

The moral of the story is that when we discuss moving items to the Cloud, we must remember that, while the term is an abstraction, the actual systems supporting our apps live on real physical servers and infrastructure somewhere.

Where that ‘somewhere’ is located and how we connect to it are important. These are details that cannot be abstracted.

Source: https://imgs.xkcd.com/comics/the_cloud.png

If we solved the connectivity issues with the Cloud, what could be moved there on Day 1?

- Stand-alone apps

- Intact Application Suites

- Software as a Service (SaaS) offerings

Stand-alone Applications

These are special-purpose applications with no interdependence on other Enterprise applications. The exception could be one-time flows, such as use of a Single-Sign-On system for credential management, but the rest of the user’s application flow should occur entirely within the Cloud.

Intact Application Suites

These are, as the name implies, a set of applications that work as a unit. Think of a typical financial management suite: General Ledger, Accounts Receivable and Accounts Payable. Each of these major systems may itself be made up of components. For example, the AP system may have a check-writing system as well as an application that supports connectivity to Banking payment systems.

An Intact system would be defined as a grouping of these component applications that work together as a unit and collectively behave like a Stand-alone application.
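Finding candidate Intact suites can be framed as a graph problem: treat each component as a node and each observed flow as an edge, set aside one-time flows to shared services such as Single-Sign-On, and the connected groups that remain are sets of components that could move together. A group of one is a Stand-alone application. The Python sketch below uses a plain breadth-first search; the component names and the `shared` allowlist are hypothetical.

```python
from collections import deque
from typing import Dict, List, Set, Tuple


def candidate_suites(
    flows: List[Tuple[str, str]], shared: Set[str]
) -> List[Set[str]]:
    """Group components connected by flows, ignoring shared services."""
    graph: Dict[str, Set[str]] = {}
    for a, b in flows:
        # Register every non-shared component, even if its only flow
        # is to a shared service (that makes it a stand-alone candidate).
        for node in (a, b):
            if node not in shared:
                graph.setdefault(node, set())
        if a in shared or b in shared:
            continue  # one-time flows (e.g. SSO) do not bind a suite
        graph[a].add(b)
        graph[b].add(a)

    seen: Set[str] = set()
    suites: List[Set[str]] = []
    for start in graph:
        if start in seen:
            continue
        group: Set[str] = set()
        queue = deque([start])
        seen.add(start)
        while queue:  # breadth-first walk of one connected group
            node = queue.popleft()
            group.add(node)
            for nxt in graph[node]:
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        suites.append(group)
    return suites


# Hypothetical flow inventory for the financial suite described above.
flows = [
    ("GL", "AR"), ("GL", "AP"),
    ("AP", "CheckWriter"), ("AP", "BankGateway"),
    ("GL", "SSO"), ("Expenses", "SSO"),
]
for suite in candidate_suites(flows, shared={"SSO"}):
    print(sorted(suite))
```

Here the GL, AR and AP components form one candidate suite, while the expense app, which only touches SSO, shows up on its own as a Stand-alone candidate.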

Software as a Service

Some SaaS Applications run in AWS, Azure or Oracle Cloud Infrastructure, while others, such as Salesforce, run in their own ‘Cloud’-like infrastructure. Each of these is interconnected with a variety of Mobile and Internet providers. As a result, many companies run systems such as Salesforce separately from their internal IT Infrastructure.

Many examples of this exist. Firms are taking their internal ERP and CRM systems offline in favor of NetSuite, and moving email to Office 365 or Google’s Gmail.

There are also app providers who develop and host apps on general purpose cloud platforms such as Amazon’s AWS offering.

This form of Cloud could be thought of as a Hosted Application model. It permits companies to start removing the internal apps that are not core to their business (likely candidates are payroll, HR, CRM, ERP and even email).

So how do I know if I am Cloud Ready?

You need to assess your systems.

An ‘Initial Cloud Readiness Assessment’ would look at the following:

- Internet, Cloud and SaaS Connectivity
  - Bandwidth
  - Latency
  - Peering Relationships
  - QoS

- Internal Connectivity
  - Bandwidth
  - Latency
  - QoS
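For the Latency items, one low-tech starting point is to time TCP connection setup from inside the network to the endpoints that matter (SaaS front doors, cloud regions, the other Data Center). The sketch below uses only Python's standard library. The target host is a placeholder, and connection-setup time is only an approximation of round-trip time: it also includes name resolution when a hostname is given, plus some local overhead. Bandwidth, peering and QoS need other tools.

```python
import socket
import statistics
import time
from typing import Dict, List


def tcp_connect_ms(host: str, port: int, timeout: float = 3.0) -> float:
    """Time name resolution (if needed) plus TCP connection setup, in ms."""
    start = time.perf_counter()
    with socket.create_connection((host, port), timeout=timeout):
        pass  # connection established; close it immediately
    return (time.perf_counter() - start) * 1000


def sample_latency(host: str, port: int, samples: int = 5) -> Dict[str, float]:
    """Take several connection timings and summarize them."""
    results: List[float] = [tcp_connect_ms(host, port) for _ in range(samples)]
    return {
        "min_ms": min(results),
        "median_ms": statistics.median(results),
        "max_ms": max(results),
    }


# Example (placeholder target; substitute the endpoints being assessed):
# print(sample_latency("example.com", 443))
```

Comparing the minimum with the median is a crude first hint of jitter or congestion along the path.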

This would be sufficient for analyzing and remediating deployment of SaaS and Stand-Alone applications. Going beyond this stage requires an ‘Application Cloud Readiness Assessment’, which maps all the flows between all components and subcomponents in an Application Suite.

Imagine a large, complex legacy app that migrates 99% of its components to the Cloud. Sounds perfect? Leaving the remaining 1% behind might be fine if it carries a small part of the data flow, but not if it is a Client Database that requires significant flows at several stages of the process.
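The partial-migration scenario can be checked against the flow inventory an Application Cloud Readiness Assessment would produce. Given where each component will live and how many sequential round trips each flow makes per transaction, the sketch below totals the round trips that cross the boundary between the Cloud and the on-premises estate. The component names, round-trip counts and 40 ms figure are hypothetical, and the model assumes those round trips happen one after another.

```python
from typing import Dict, List, Tuple

# (caller, callee, sequential round trips per transaction)
Flow = Tuple[str, str, int]


def boundary_cost_ms(
    flows: List[Flow], placement: Dict[str, str], rtt_ms: float
) -> float:
    """Added latency per transaction from flows whose endpoints end up
    in different locations after migration (round trips assumed serial)."""
    crossing = sum(
        trips
        for src, dst, trips in flows
        if placement[src] != placement[dst]
    )
    return crossing * rtt_ms


flows = [
    ("portal", "orders", 2),
    ("orders", "pricing", 3),
    ("orders", "client_db", 25),
    ("billing", "client_db", 20),
]
# Everything moves except the Client Database.
placement = {
    "portal": "cloud",
    "orders": "cloud",
    "pricing": "cloud",
    "billing": "cloud",
    "client_db": "on_prem",
}
print(boundary_cost_ms(flows, placement, rtt_ms=40))  # 1800 ms per transaction
```

Only one component stays behind, yet because it sits on the busiest flows, it accounts for all of the added delay.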

This subject is very complex and often problematic, because Application teams are spread across many constituencies and, even where firms have them, Enterprise Architects rarely have the technical networking skills needed to see the whole picture. Having the right partner to help you navigate all of the choices is key. To learn more, contact us about the assessments we can perform to address your concerns and improve your network.

Further posts in this series will explore these subjects and illustrate solutions.