In a prior blog, I covered Equinix Cloud Exchange (ECX) Fabric. If you’re asking, “what’s an Equinix?”, please see the prior blog.

In that blog, we saw that Equinix can provide agile virtualized connectivity from a company’s presence at a suitable Equinix facility not only to Cloud Service Providers (CSPs) like Amazon and Azure, but also to the CSP’s presence at another Equinix facility, or even to another company with Equinix presence (assuming the other company permits the connection). Coming soon: global virtual connections between ECX sites.

What we did not cover in that blog was how you establish your presence at an Equinix facility and what this might mean for your WAN.

Brief History of the WAN

Historically, the various iterations of the WAN became centralized with the datacenter as a hub. As Cloud adoption increases, that WAN design model needs to change. The problem is that back-hauling traffic bound for a CSP or SaaS provider through your datacenter and firewall / border complex adds latency, slowing down Cloud and SaaS application performance. Going direct from the branch to the Internet, Cloud, or SaaS provider is the fastest, but doing that raises security challenges.
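To make the back-haul penalty concrete, here is a minimal Python sketch that adds up hop latencies for three paths: back-haul through the datacenter, a regional hub, and direct from the branch. Every name and number in it (the `LINK_MS` table, `path_rtt_ms`, the 1 ms inspection delay) is a made-up assumption for illustration, not a measurement. Plug in your own figures.

```python
# Hypothetical one-way link latencies in milliseconds (illustrative only).
LINK_MS = {
    ("branch", "datacenter"): 35.0,
    ("datacenter", "cloud"): 30.0,
    ("branch", "regional_hub"): 8.0,
    ("regional_hub", "cloud"): 4.0,
    ("branch", "cloud"): 10.0,
}


def path_rtt_ms(path, link_ms=LINK_MS, inspection_ms=0.0):
    """Round-trip time for a path.

    Sums the one-way latency of each hop, adds an assumed security
    inspection delay (applied once per direction), and doubles the result.
    """
    one_way = sum(link_ms[(a, b)] for a, b in zip(path, path[1:]))
    return 2 * (one_way + inspection_ms)


# Back-haul and regional paths pass through a security stack; direct does not.
backhaul = path_rtt_ms(["branch", "datacenter", "cloud"], inspection_ms=1.0)
regional = path_rtt_ms(["branch", "regional_hub", "cloud"], inspection_ms=1.0)
direct = path_rtt_ms(["branch", "cloud"])

for name, rtt in [("backhaul", backhaul), ("regional", regional), ("direct", direct)]:
    print(f"{name:9s} {rtt:6.1f} ms round trip")
```

With these assumed numbers, the regional path lands close to the direct path while still passing through a security stack, which is the trade-off the rest of this blog explores.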

Unfortunately, there’s no vendor I know of that is stellar at SD-WAN (in the automation of application aware edge routing sense), encrypted VPN, DNS whitelisting to route SaaS traffic directly, and all the edge security mechanisms Security teams like to impose at the Internet edge.

That’s a lot to code! So, I’m not holding my breath for all that quite yet. (Reality versus Marketing)

That means that branch-to-Internet requires several devices, even if you use an SD-WAN approach. Managing a “pile o’ gear” at every branch site is costly and not easy. One automated tool to manage them all (aka “SD-Branch”) – good luck with that!

Another approach is to regionalize your WAN, connecting branches to a relatively close hub site with the requisite security apparatus. This approach has fewer boxes, is more manageable, and has a lower device cost. You just need a place to put the devices, and it preferably should have great Internet connectivity.

All that is why, to varying degrees, some SD-WAN vendors are pitching a regional WAN strategy. Haul your traffic “part way” to a regional site, security scan it, and then do high-speed backbone from there to Cloud, SaaS, or other regional sites. This allows the SD-WAN vendor to do what they’re good at, and security tools of your choice complement what the SD-WAN device does.

Here’s where you have a choice. You can do it with SD-WAN and VPN over whatever transport you can scrounge up / afford / choose, or you can do it on huge provider backbone links (e.g. Equinix and carriers with Equinix presence).

You might consider cloud-centric WAN, but then you’re paying the Cloud Provider for egress traffic, plus you’re doing VPN over Internet: encryption = costly speed bump. (Pete’s recent rule of thumb: IPsec VPN / SD-WAN is generally fine for small to medium size office speeds, but gets costly for bigger offices and datacenters, where private WAN/MAN might fit well.)

About Performance Hub

Performance Hub is an Equinix-based design concept that can provide agility and scaling in connecting to CSPs and SaaS sites, WAN and Internet providers, and business partners, along with lower circuit costs. It can provide more predictable bandwidth, control latency and quality of connection, and meet high throughput needs.

The basic idea is fairly simple: put your regional hubs in Equinix facilities. We’ll see later that there can be some interesting variations on that theme.

Performance Hub gives you a way to have a regionalized global network edge (WAN + Internet), focusing on regional hubs and regional (distributed) security, and reducing the latency of backhaul through the datacenter. This approach avoids having to manage security devices at every branch site. It also allows you to regionalize data that you don’t want to put into the cloud, placing it “closer to the cloud” while keeping it under your control.

In addition, having a well-connected Equinix hub means you can interconnect multiple cloud instances there, rather than proliferating virtual routers and firewalls into cloud instances. (There are pros and cons to each of these approaches, but that is a big enough topic to belong in a separate blog.)

To sum up, regional Performance Hub as WAN de-emphasizes a datacenter-centric WAN, putting the regional hub sites in effect in the middle, between the datacenter and the cloud. Using ECX Fabric for regional and global connectivity makes it agile.

Understanding Performance Hub

The basic concept of Performance Hub is to contract for and place equipment at multiple regional Equinix facilities, and connect your sites, datacenters, and/or WAN to the best/nearest Performance Hub. That gives the region access to what is, in effect, “shared agility”.
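One simple way to think about “best/nearest” is to measure latency from each site to each candidate hub and pick the lowest. The sketch below does just that. The site names, hub names, latency values, and the `assign_sites_to_hubs` helper are all hypothetical placeholders, and latency is only one of the criteria you might weigh.

```python
from typing import Dict


def assign_sites_to_hubs(latency_ms: Dict[str, Dict[str, float]]) -> Dict[str, str]:
    """Map each site to the candidate hub with the lowest measured latency."""
    return {site: min(hubs, key=hubs.get) for site, hubs in latency_ms.items()}


# Hypothetical measured latencies (ms) from each site to each regional hub.
measured = {
    "branch-a": {"hub-east": 9.0, "hub-central": 24.0, "hub-west": 61.0},
    "branch-b": {"hub-east": 31.0, "hub-central": 12.0, "hub-west": 38.0},
    "datacenter-1": {"hub-east": 55.0, "hub-central": 29.0, "hub-west": 7.0},
}

assignments = assign_sites_to_hubs(measured)
for site, hub in assignments.items():
    print(f"{site} -> {hub}")
```

In practice you would probably also factor in circuit cost and which carriers reach each site, so treat this as a starting point rather than a design rule.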

Doing this regionally keeps the access circuits local and keeps latency low. Using your own equipment rather than virtual SD-WAN devices / server-based devices provides you with ASIC-based forwarding, that is, high-performance hardware forwarding. And if you want, you might use SD-WAN VPN for small offices’ access to the regional Performance Hub.

Some SD-WAN designs involve a similar regional approach, using SD-WAN VPN rather than circuits to connect to managed regional hubs, probably in a colocation space. If your underlying transport is the Internet, encryption will slow you down, and the transport may have occasional quality “brownouts”. SD-WAN is supposed to adjust and shift traffic, but the speed with which it does so likely varies between vendors. So SD-WAN VPN may be useful for connecting a large number of small offices, keeping the cost down, while you leverage big pipes for the WAN connections between regional hubs and for Internet or CSP access.

Designing around Equinix Performance Hub gets you a corporate device in key regional locations that are extremely well-connected, with local presence of a large number of Internet and WAN/MAN connectivity providers, and with very high-speed connections to the sites. According to Equinix, the presence of all the connectivity providers leads to provider cost competitiveness. It also means Equinix can typically patch your Performance Hub through to a circuit or connectivity provider in under 48 hours (let’s call that “fairly agile”).

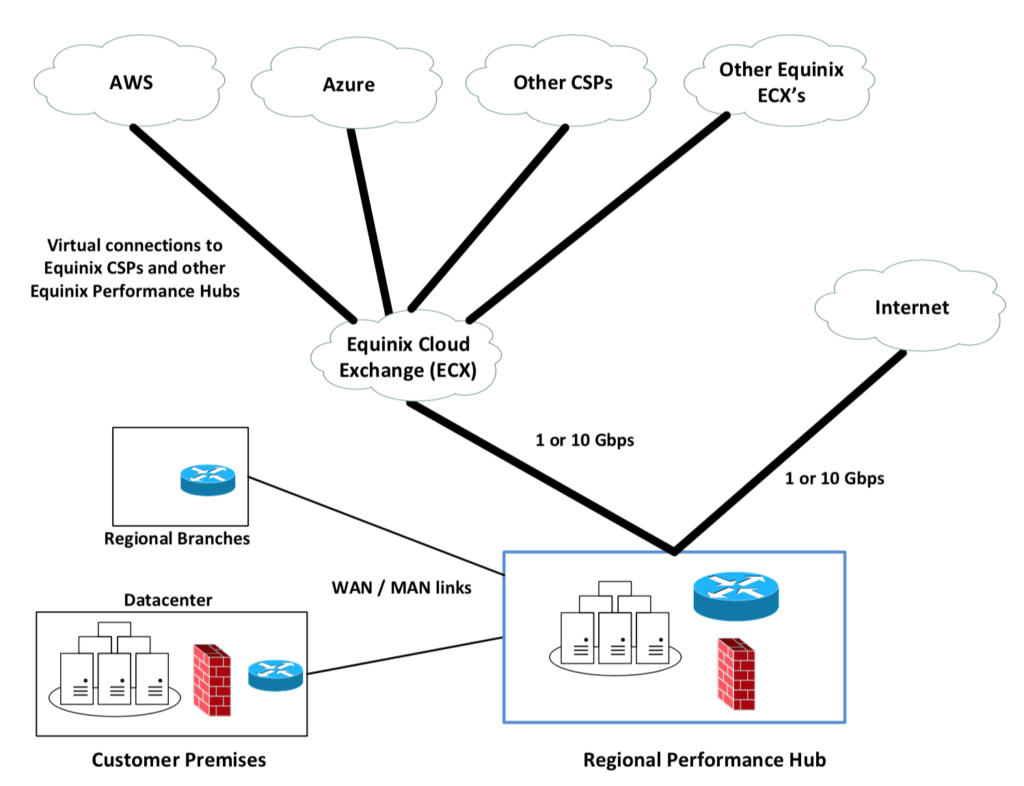

The following diagram shows what a regional Performance Hub might look like.

Building a Performance Hub

Earlier, I said Performance Hub is a concept. It means having some device footprint at Equinix, and (probably) a link to your datacenter or WAN. Yes, you could do this sort of thing with other colocation providers, e.g. if you don’t need the Equinix amenities, reliability and/or prices.

There’s no one right way to build or use a Performance Hub, seeing as it comes down to a WAN/MAN link to something at Equinix. “Something” might turn out to be an L3 switch for low-cost speed. “Something” could also turn out to be that, plus router, plus firewall, maybe other security devices, maybe server and storage or hyper-converged equipment.

If you want to control and manage the “something”, then you need to get rack space from Equinix, buy hardware, and build it out. The alternative is to buy access from a managed service provider (MSP), which means either some rack space or sharing their equipment (L3 switch, maybe a router, maybe a firewall). Using shared hardware might be the fastest way to get started: the MSP probably already has the shared equipment in place, and just has to turn up your service. That could be a way to try before putting your own equipment onsite.

Summary

Performance Hub:

- Provides lower latency than datacenter back-haul

- Allows a regionalized edge security approach

- Positions you for agility

- Great locations globally

- Highly reliable and well-connected datacenters

- Low-cost connectivity

- Strong datacenter security

- Regionalized data positioning under your control

Disclaimer

NetCraftsmen does some work for / with Equinix, so this blog may not represent completely neutral opinions.

References

- My prior blogs about SD-WAN

- Equinix Interconnection Oriented Architecture (theme: the future is not just cloud but connections to partners, IOT devices, etc.)

Comments

Comments are welcome, whether in agreement or constructive disagreement with the above. I enjoy hearing from readers and carrying on deeper discussion via comments. Thanks in advance!

Twitter: @pjwelcher
