This blog attempts to briefly cover container basics — a subsequent blog may summarize container networking. The goal is to entice you into looking at containers more closely. This re-creates part of a learning journey I’m on, complete with some good links I found — ahem, a curated reading list.

The following represents my attempt to summarize some of the basics of containers, including why they matter.

Container Basics

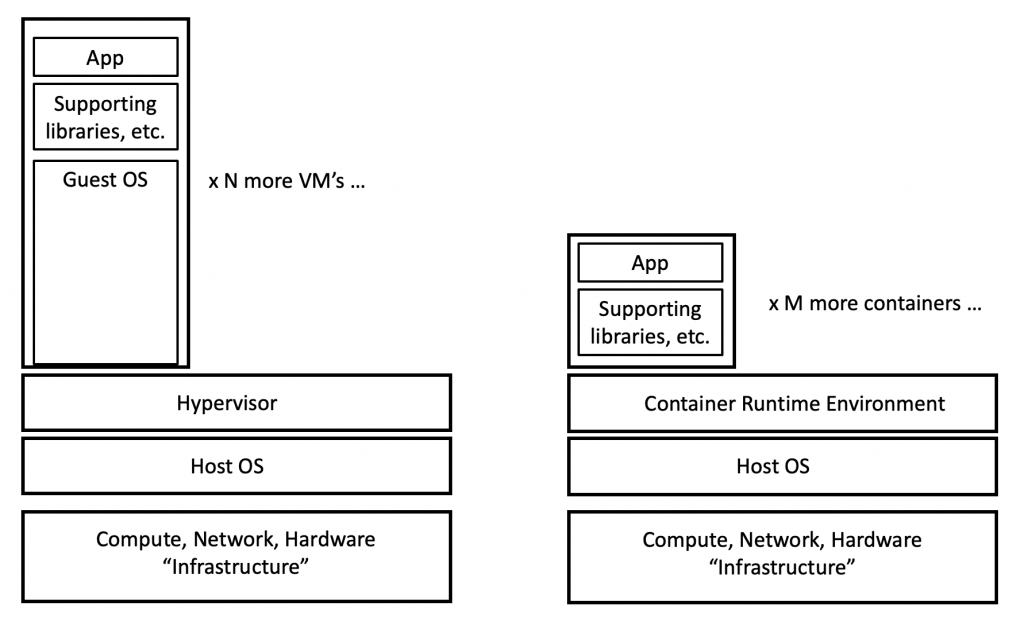

It may help to start with “what is a virtual machine”. Virtual machines run on a hypervisor such as VMware ESXi. The hypervisor virtualizes the hardware it runs on, allowing multiple virtual machines, potentially running different guest Operating Systems, to share the underlying hardware in a secure way. Each VM is a virtual server running some supported Operating System plus installed applications.

VMs are isolated, connecting only at the logical network level. That means that processes running on one VM do not inherently have the ability to interact directly with those on another. The VMs would have to use the logical networking just as two servers would interact across a network. A problem in one VM is fairly isolated from the other VMs running on a host computer. The hypervisor typically caps resource usage to prevent any VM from consuming all CPU, memory, or disk space.

There are costs associated with doing this — and I’m not talking about VMware licensing!

The hypervisor virtualization layer adds overhead to support the virtualized compute and network resources, which costs some CPU power and network throughput, but in tolerable amounts. In addition, each VM contains a fairly full copy of its guest operating system, which consumes memory when the VM is running.

Containers use Linux OS mechanisms to run curated container images on top of a shared “container engine” and shared operating system.

With containers, the cost is lower: the containers share the host’s OS kernel, so they do not each have to include that code. That makes them smaller, so they start up faster and use fewer host resources.

There is more to it. Containers generally are very stripped-down Linux images, excluding anything not needed to run the micro-service on them. For instance, the Alpine Linux base image is under 6 MB in size, whereas typical installs of Linux might run 150 MB or more. Troubleshooting tools like ping may well be missing. The “busybox” container image is small, but contains some such network tools, useful for learning or troubleshooting.

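Container isolation relies on two Linux kernel features. Namespaces limit what a container can see (processes, network interfaces, mount points), while cgroups limit how much CPU, memory, and other resources it can consume.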
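On a Linux host you can peek at both mechanisms from any process, containerized or not. The sketch below is only an illustration (the function names are mine): it reads the standard `/proc/<pid>/ns` and `/proc/<pid>/cgroup` entries and quietly returns nothing on systems that lack them, such as macOS.

```python
import os
from pathlib import Path


def namespace_ids(pid="self"):
    """Map namespace type (e.g. 'net', 'pid', 'mnt') to its kernel identifier.

    Reads the symlinks under /proc/<pid>/ns, which exist only on Linux.
    Returns an empty dict if the directory is missing or unreadable.
    """
    ns_dir = Path("/proc") / str(pid) / "ns"
    ids = {}
    try:
        for entry in sorted(ns_dir.iterdir()):
            # Each entry is a symlink whose target looks like 'net:[4026531840]'.
            ids[entry.name] = os.readlink(entry)
    except OSError:
        pass
    return ids


def cgroup_membership(pid="self"):
    """Return the raw lines of /proc/<pid>/cgroup (empty list if unavailable)."""
    try:
        return Path("/proc", str(pid), "cgroup").read_text().splitlines()
    except OSError:
        return []


if __name__ == "__main__":
    for name, ident in namespace_ids().items():
        print(f"{name:10s} {ident}")
    for line in cgroup_membership():
        print("cgroup:", line)
```

Two processes that print the same identifier for a namespace type share that namespace. Run the script once on the host and once inside a container, and the identifiers will typically differ.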

Here’s a diagram showing this:

This is intended to suggest that one might be able to run more containers on a given host than VMs (assuming one does not have “bloated” containers that are basically just a VM).

In a sense, a container is a VM “a la carte”, where you consume just what you need. Stripping out unnecessary Linux services, etc., also reduces the security attack surface. All Good!

Whether you are running on premises or in the cloud, that has the potential to save money. As we’ll see below, that’s not the only reason DevOps is using containers.

Container Images

Container images are the packaged code that a container engine or orchestration system runs. Images are immutable (unchangeable), and the containers started from them are ephemeral (they may run only for short periods, when needed). Images are built using a manifest (for Docker, a Dockerfile), pulling in a base image and then adding things to it or making changes. The image is then run by the container engine.

Containers use a union file system. Each step in the manifest adds a layer on top of the base container image. Files in upper (newer) layers overwrite (hide) the same files in lower layers, while the lower layers themselves stay unchanged.
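The layering idea is easy to model in a few lines. This is a toy sketch, not how a real union file system is implemented: each layer is a plain dictionary of path to content (all made up for illustration), and Python’s `collections.ChainMap` plays the part of the merged view.

```python
from collections import ChainMap

# Hypothetical layer contents: path -> file content. Illustrative only.
base_layer = {"/etc/os-release": "Alpine", "/bin/sh": "busybox shell"}
app_layer = {"/app/server.py": "print('hello')"}
config_layer = {"/etc/os-release": "Alpine (customized)"}

# ChainMap searches its maps left to right, so the newest layer goes first.
image_view = ChainMap(config_layer, app_layer, base_layer)

print(image_view["/etc/os-release"])  # Alpine (customized): upper layer wins
print(image_view["/bin/sh"])          # busybox shell: falls through to the base
print(base_layer["/etc/os-release"])  # Alpine: the lower layer is untouched
```

Because lower layers are never modified, many images can share the same base layer on disk, which is part of why pulling a new image is often quick.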

Container images inherently capture a history of the components and dependencies they are built from. This also means that the developer doesn’t have to support all the permutations of OS and libraries and supporting code that a customer might have in their environment: the container comes pre-packaged as a self-contained consistent runtime unit. Fewer bugs and support hassles!

It also avoids situations where one app needs a certain version of a library and another app needs a different version. If they’re in different containers, no problem! This speeds development by decoupling application components.

Concerning immutable: container images are typically not upgraded in place. They get replaced with a new image containing the pre-packaged changes, upgrades, or whatever. If the container orchestrator has an auto-scaling feature, it can be used to phase out containers running the old image and phase in ones running the new image. Rollback is simple: just reverse the process.
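Here is a minimal sketch of that replace-rather-than-patch idea. It is a hypothetical model, not any orchestrator’s actual API: each replica is represented only by the image tag it runs, and the image names are invented.

```python
def rolling_replace(replicas, new_image):
    """Yield the replica list after each single-replica replacement.

    Hypothetical model: a replica is just the image tag it runs.
    Nothing is patched in place; an old replica is dropped and a
    new one is started from new_image.
    """
    current = list(replicas)
    for i in range(len(current)):
        if current[i] != new_image:
            current[i] = new_image
            yield list(current)


if __name__ == "__main__":
    running = ["shop:1.0", "shop:1.0", "shop:1.0"]
    steps = list(rolling_replace(running, "shop:1.1"))
    for step in steps:
        print(step)
    # Rollback is the same procedure pointed back at the old tag.
    for step in rolling_replace(steps[-1], "shop:1.0"):
        print(step)
```

At every step some replicas are still serving, which is the point of phasing images in and out rather than upgrading a running system.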

This means consumers of a container are running a very well-defined object directly from the vendor, so there are few or no local variations (patch levels on the OS, variations during application installation, etc.) that can create one-off bugs. The container should behave identically across customers and platforms.

Yes, that might also be done with VM images, especially when production is in the cloud, but moving big VM images around is slow!

Interaction is also where APIs come in, as (allegedly) well-defined ways for services running in VMs or containers to interact. I say allegedly because, from my perspective, API documentation is sometimes rather lacking, or even verbose but fairly useless due to a lack of examples, such as the sequences of API calls needed to perform certain tasks. Also, early versions of APIs seem to change rapidly and may lack version awareness and backwards compatibility. All of which confirms that developers are human.

Pre-built containers allow fast development and deployment. A developer can download container images supporting what they are building or deploying, rather than installing code on a server before they can get started. Of course, for production, one would hope that security and trust were taken into account for the base container, all code installed in it, and every change made to it. A security versus convenience tension lurks there.

Another alternative is to download a base container (e.g. some Linux version) and then install tools into it, automated via a Dockerfile. As an example, if you want to explore telemetry in Cisco Nexus 9000 switches, Cisco has a Dockerfile to set up a container available with a set of tools installed. By doing it that way, Cisco doesn’t have to actually provide the container for download — and we don’t have to spin up a VM and then walk through a set of installation instructions for various packages.
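A Dockerfile is just a short text file, so a few lines of Python are enough to show its general shape. This is a sketch under assumptions: the base image tag and package names are examples I picked, and it has nothing to do with the Cisco Dockerfile mentioned above. It uses `apk`, Alpine’s package manager; other base images would use `apt-get`, `dnf`, and so on.

```python
import json


def render_dockerfile(base_image, packages, command):
    """Return Dockerfile text: a base image, one install layer, a default command."""
    lines = [f"FROM {base_image}"]
    if packages:
        # One RUN instruction produces one new layer on top of the base image.
        lines.append("RUN apk add --no-cache " + " ".join(packages))
    # CMD in its JSON-array ("exec") form, e.g. CMD ["sh"]
    lines.append("CMD " + json.dumps(command))
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Example values only: an Alpine base plus two troubleshooting tools.
    print(render_dockerfile("alpine:3.19", ["curl", "tcpdump"], ["sh"]), end="")
```

Saving that output as `Dockerfile` and running `docker build` in the same directory would produce a small image with those tools added on top of the base.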

The Runtime Environment allows containers to be run almost anywhere. I’ve had 20 containers running on my Mac, for instance (various store, guestbook, or web page demos of containers). People have built Kubernetes clusters out of Raspberry Pi computers.

Running containers are stateless. That means that any changes you make inside a container go away when it is removed and replaced by a fresh container started from the image. You would typically mount an external storage resource if you have data to read or store. If you’re used to messing around with Linux VMs, that takes some getting used to. It forces tweaks to be documented in the manifest, or in configuration files on mounted storage.
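A toy model makes the difference between the container’s own scratch space and mounted storage concrete. Everything here is hypothetical (file names, contents, the `new_container` helper); dictionaries stand in for the image, the writable layer, and the external volume.

```python
from collections import ChainMap

IMAGE = {"/etc/motd": "welcome"}  # hypothetical image contents, never modified


def new_container(image, volume):
    """Start a container: a fresh writable layer over the image, plus a mounted volume."""
    writable = {}  # discarded along with the container
    return {"fs": ChainMap(writable, image), "data": volume}


volume = {}  # stands in for external storage that outlives any container

first = new_container(IMAGE, volume)
first["fs"]["/etc/motd"] = "tweaked by hand"  # lands in the writable layer only
first["data"]["orders.db"] = "42 rows"        # lands on the mounted volume

second = new_container(IMAGE, volume)  # replacement container, same image
print(second["fs"]["/etc/motd"])       # welcome: the hand tweak is gone
print(second["data"]["orders.db"])     # 42 rows: the volume data survived
```

The hand-made tweak would only survive if it were baked into the image via the manifest, or kept in a configuration file on the mounted storage.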

What are Docker, Kubernetes, Docker Swarm?

Docker is a container engine letting you build, test, and run container images to deploy applications quickly. The Docker container format was one of several initial formats, and is now part of the standard per the Open Container Initiative (OCI).

There is also a standard for container networking, the Container Network Interface (CNI). Docker’s Container Network Model (CNM) is an alternative specification. We’ll go into container networking more in a separate blog.

Kubernetes, originally developed at Google, provides a way to orchestrate running groups of containers (pods) and provide services for them. Docker Swarm is an alternative. That too is a topic for another blog.

References

Here are some links to get you started working with containers.

- Google containers introduction. Like the above material, then sales pitch.

- Docker introductory tutorial. Walks through building a container image and running it.

- NGINX free book: Container Networking from Docker to Kubernetes

- VMware book: Containers and Container Networking (2018)

- O’Reilly Book: Kubernetes Up and Running

- How to Run Kubernetes on Raspberry Pi

Comments

Comments are welcome, whether in agreement or in constructive disagreement with the above. I enjoy hearing from readers and carrying on deeper discussion via comments. Thanks in advance!

—————-

Hashtags: #CiscoChampion #TechFieldDay #TheNetCraftsmenWay

Twitter: @pjwelcher
