This blog is about SD-Access single-site designs. My goal is to touch on some (but likely not all) important aspects of single-site design and provide links to relevant Cisco documents.

Prior blogs in this series:

- What Is SD-Access and Why Do I Care?

- Navigating Around SD-Access

- Managing SD-Access Segmentation

- Securing SD-Access Traffic

The chances are that you’re doing an SD-Access single site design because:

- You’re doing a Proof of Concept in a lab

- Or learning in a lab

- Or doing your first site without a lab

- Or all you have is one site, probably fairly large.

If you’re eventually going to be doing multiple sites, especially interconnected ones rather than standalone, I’d suggest trying that with two sites, and maybe a “datacenter” with a fusion firewall and some “core network” switches.

Pre-Requisites Checklist

Here are some things to check:

- Before you buy anything, let alone start deploying it, do your homework: make sure your design and the switches are sized properly. That means not just the number and speed of ports, but other scalability factors, such as the sizing of control plane nodes (CN’s), border nodes (BN’s), and wireless controllers (WLC’s).

- If you’re buying new equipment, you are probably OK, but you should always be in the habit of checking feature support.

- If you’re planning on using older equipment, for example, re-using some gear for a lab, double-check feature support and any code upgrade limitations, using the latest Compatibility Matrix (see References, below).

Best Practice: Carefully do this homework before you buy! Better: get your Cisco partner and/or Cisco account team to review your draft BOM with regard to ISE and DNAC, and to review the feature/hardware mix for new and existing devices.
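The checklist above can be partly mechanized. Here is a minimal sketch, assuming you copy the relevant rows of the Compatibility Matrix into a small table by hand. The platform names, roles, and release numbers below are made-up placeholders (not real Cisco data), and `BomLine` and `check_bom` are hypothetical names of my own.

```python
from dataclasses import dataclass

# Placeholder rows only. Real entries must be copied from the current
# SD-Access Compatibility Matrix; none of these values are actual Cisco data.
# Key: (platform, fabric role) -> minimum supported release as a tuple.
SUPPORTED = {
    ("example-access-switch", "EN"): (2, 0, 0),
    ("example-core-switch", "BN"): (2, 1, 0),
    ("example-core-switch", "CN"): (2, 1, 0),
}


@dataclass(frozen=True)
class BomLine:
    platform: str
    role: str        # fabric role the device is planned for: "EN", "BN", "CN", "WLC"
    release: tuple   # software release the device will run, e.g. (2, 1, 0)


def fmt(release):
    """Render a release tuple such as (2, 1, 0) as '2.1.0'."""
    return ".".join(str(part) for part in release)


def check_bom(bom):
    """Return a list of problems found; an empty list means nothing was flagged."""
    problems = []
    for line in bom:
        minimum = SUPPORTED.get((line.platform, line.role))
        if minimum is None:
            problems.append(
                f"{line.platform}: role {line.role} is not in the support table"
            )
        elif line.release < minimum:  # tuples compare element by element
            problems.append(
                f"{line.platform}: release {fmt(line.release)} is below "
                f"{fmt(minimum)} required for {line.role}"
            )
    return problems
```

An empty result only means the table you typed in did not flag anything. It is not a substitute for having your partner or account team review the BOM.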

Reference Models

Cisco has defined some “reference models” for sites, based on size (number of endpoints, VN’s, and AP’s), along with diagrams. This provides a useful way to tell if you need to distribute the workload and to get a rough idea of how much hardware you need.

The models help answer questions such as: can the BN and CN functions be on shared devices, or do they need to be on different devices? The same question applies to WLC (wireless controller) functionality.

The section “SD-Access Site Reference Models” in the Design Guide contains some useful information:

- In general, fewer sites and larger fabrics scale better.

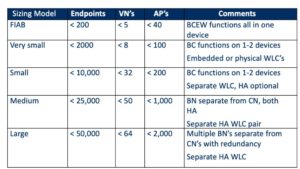

- Sizing model information (for single sites):

It provides the following table:

The Cisco design guide section goes on to discuss each of the above in depth, with diagrams. The diagrams tend to include routers to the rest of the network and some of the services.

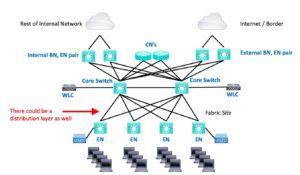

The diagrams are pretty much standard 2 or 3 tier switching architectures. What changes is how the SDA roles are spread around as the scale increases. Here is my version/summary:

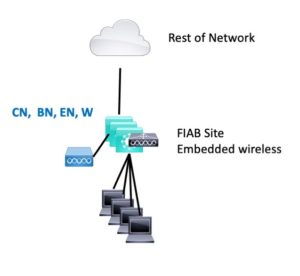

FIAB

FIAB (Fabric in a Box) consists of one switch or stack, connected to ROTN (Rest Of The Network), possibly by a router. The WLC is in the FIAB box along with the EN, BN, and CN roles.

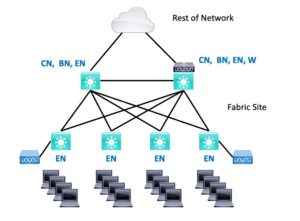

Very Small

Very Small is 1 or 2 BN’s (which also act as CN’s and probably take the EN role as well), with some EN’s in closets. They can be single switches or stacks.

Generally, when possible, I prefer to go with 2 BN’s for resiliency, both acting as CN’s and probably EN’s as well. The two BN’s connect to each other, and the EN’s or EN stacks are dual-homed to the BN’s. The WLC can be embedded or physical.
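The resiliency pattern just described (BN’s connected to each other, every EN dual-homed to both BN’s) is easy to sanity-check against a link list. This is a minimal sketch under my own assumptions: links are given as pairs of device names, and the function name `resiliency_gaps` is hypothetical.

```python
def build_neighbors(links):
    """Turn a list of (device_a, device_b) links into an adjacency map."""
    neighbors = {}
    for a, b in links:
        neighbors.setdefault(a, set()).add(b)
        neighbors.setdefault(b, set()).add(a)
    return neighbors


def resiliency_gaps(links, border_nodes, edge_nodes):
    """List departures from the 'BN pair plus dual-homed EN' pattern."""
    neighbors = build_neighbors(links)
    borders = set(border_nodes)
    gaps = []

    # The border nodes should be directly connected to each other.
    for bn in sorted(borders):
        for other in sorted(borders - {bn} - neighbors.get(bn, set())):
            if bn < other:  # report each missing BN-to-BN link only once
                gaps.append(f"{bn} and {other} are not directly connected")

    # Every edge node (or stack) should have an uplink to every border node.
    for en in sorted(edge_nodes):
        missing = borders - neighbors.get(en, set())
        if missing:
            gaps.append(f"{en} has no uplink to {', '.join(sorted(missing))}")
    return gaps


links = [("bn1", "bn2"), ("en1", "bn1"), ("en1", "bn2"), ("en2", "bn1")]
print(resiliency_gaps(links, ["bn1", "bn2"], ["en1", "en2"]))
```

This only checks direct links. In a site with intermediate (underlay-only) switches you would need a path-based check instead.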

Small

Small is similar, but with more EN’s / stacks. Larger BN’s might be needed, depending on the number of uplinks/closets. A physical, separate, dedicated WLC is recommended.

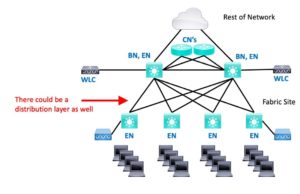

Medium

Medium, I’d personally call “pretty fair-sized.” Anyway, Medium might be a three-tier (core, distribution, access) hierarchy spread across multiple buildings or some sort of campus. The CN function is offloaded to a router or switch, off the path, connected via two BN’s.

The distribution switches (not shown) could be pure underlay, or if end-system devices connect to them, they might be assigned the EN role. (I don’t think I’ve seen this discussed in print anywhere; it makes the diagrams uglier?)

A physical separate dedicated WLC pair is recommended for a Medium site.

The Medium site probably has a services block as well (DNS, DHCP, etc.).

Large

Large is similar, but with more BN’s, possibly dividing up the workload over an internal BN pair and an external BN pair. (Internal to connect to the rest of the organization, external to connect to the outside world / Internet via border devices, firewalls, etc.)

You can add ISE PSN’s (Policy Service Nodes) for local scalability if desired. (After some consideration, I’m pretty sure they do not provide site WAN/core isolation survivability – I don’t think I’ve seen this discussed/documented anywhere.)

I’ll note that Cisco’s smaller site diagrams all show a fusion router at the site. You may well be able to use L3 switching on the BN’s to tie into the core or WAN if you do not need router features such as VPN and fancy QoS. Cisco’s larger site diagrams take that L3 switching approach.
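To keep the five models straight, the role placement described above can be written down as a small lookup table. This is only my summary of the descriptions in this blog, not Cisco’s table: the `Placement` type and field names are hypothetical, and the Large WLC entry is my assumption that “similar to Medium” carries over.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Placement:
    border_nodes: str
    cn_on_border: bool  # True when the CN role shares hardware with the BN's
    wlc: str


# Summary of the per-model descriptions in this blog (not an official table).
REFERENCE_MODELS = {
    "FIAB": Placement("1 switch or stack, also EN and CN", True,
                      "in the FIAB box"),
    "Very Small": Placement("1 or 2, probably also EN", True,
                            "embedded or physical"),
    "Small": Placement("1 or 2, possibly larger", True,
                       "physical, separate, dedicated"),
    "Medium": Placement("2", False,
                        "physical, separate, dedicated pair"),
    # Assumption: the text only says Large is "similar" to Medium.
    "Large": Placement("more, possibly internal pair plus external pair", False,
                       "physical, separate, dedicated pair (assumed)"),
}


def models_with_dedicated_cn():
    """Names of the models where the CN function is offloaded from the BN's."""
    return [name for name, p in REFERENCE_MODELS.items() if not p.cn_on_border]


for name, placement in REFERENCE_MODELS.items():
    where = "on the BN's" if placement.cn_on_border else "on dedicated devices"
    print(f"{name}: CN {where}; WLC {placement.wlc}")
```

The point of the table is the trend: as the site grows, the CN and WLC functions move off the border devices onto their own hardware.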

Sizing and Services

The previous section covers how the high-level design approach handles scaling. This refers primarily to scaling the network devices and distributing the LISP and other workloads sufficiently.

For DNAC version 1.3.3.x there is a DNAC Scaling Document; see the References below. Use this to determine which DNAC SKU / model is needed. (As new versions come out, no doubt there will be new versions of the scaling document.)

Presumably, you have already done something similar with Cisco ISE, using the ISE Scaling document. If you’ve been doing just TACACS, you may need to beef up your ISE cluster to handle RADIUS and 802.1X. See the References below.
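When working through the DNAC and ISE scaling documents, I find it helps to compare planned counts to the documented limits and flag anything with little headroom. A minimal sketch follows. The metric names, the numbers, and the 80% threshold are placeholders I made up; the real limits must come from the scaling document for your version and SKU.

```python
def utilization(planned, limits):
    """Return {metric: planned / limit} for every planned metric."""
    missing = sorted(set(planned) - set(limits))
    if missing:
        raise KeyError(f"no documented limit entered for: {', '.join(missing)}")
    return {metric: planned[metric] / limits[metric] for metric in planned}


def tight_metrics(planned, limits, threshold=0.8):
    """Metrics whose planned count exceeds `threshold` of the documented limit."""
    used = utilization(planned, limits)
    return sorted(metric for metric, share in used.items() if share > threshold)


# Placeholder values only; copy real limits from the scaling document.
limits = {"endpoints": 10000, "access_points": 1000, "fabric_nodes": 500}
planned = {"endpoints": 9000, "access_points": 300, "fabric_nodes": 120}

print(tight_metrics(planned, limits))
```

Anything flagged is a hint to look at the next larger appliance or node count, or at least to ask your account team how much growth room the limit really leaves.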

Criticality and high availability might be another consideration. SD-Access doesn’t work very well when your ISE is down.

As more of the Cisco value-add moves to software, we are living more and more in the application space, where we have to (a) think about scaling the server-side of things (ISE and DNAC), and (b) be careful about feature support in the software, and which hardware supports which features.

References

- Cisco Press book, Software Defined Access

- Cisco Software-Defined Access Design Guide

- Scaling ISE information (Cisco Community page)

- CiscoLive 2020 Presentation: Advanced Architect, Design and Scaling ISE (BRKSEC-3432)

- SD-Access Compatibility Matrix

- DNAC scaling document